Summary

Organisms of various kinds can fake their reward signals, or "wirehead." This is true both for simple reinforcement learners that optimize raw input stimuli and for more sophisticated agents that optimize goals defined relative to beliefs about the external world. Evolution optimizes agents to avoid faking input signals, but it also seems that agents may naturally avoid wireheading in many cases, because either they're too dumb to know how to do it, or they're smart enough to realize that wireheading would decrease their goal satisfaction relative to their current beliefs and values. This argument is speculative and may be proven wrong empirically as people try building AIs with various utility functions. (Note: I personally prefer for people to move slower rather than faster on building AI.) Even if AIs don't literally wirehead in the sense of fooling themselves into believing happy thoughts, the task of precisely specifying the content of their goals remains extremely difficult.

Introduction

Organisms are motivated to seek positive reward signals and avoid negative ones. These signals are transmitted via electrical impulses and chemical molecules, which in principle can be faked. For instance, people can take drugs that happen to mimic natural pleasure signals, and they can electrically stimulate brain regions that produce pleasurable sensations and/or cravings for more stimulation. This process of faking reward signals is what I call "wireheading" in this piece.

![This image shows a BrainGate interface, which is not used for wireheading, but perhaps a wireheading device would look similar. By PaulWicks at en.wikipedia (Transferred from en.wikipedia) [Public domain], from Wikimedia Commons: https://commons.wikimedia.org/wiki/File:BrainGate.jpg](/wp-content/uploads/2014/09/BrainGate.jpg)

Wireheading is evolutionarily maladaptive. Reward signals are designed to motivate fitness-enhancing behaviors, so when they can be faked, the organisms focus on generating more wireheading signals instead of acting effectively in the world. Drug addicts more interested in their next chemical hit than in food, sex, or power are less likely to pass on their genes.

Is wireheading a common situation for animals and artificial minds? Is it only because of selection pressure that we don't see widespread wireheading today? Or is wireheading relatively rare even among newly created agents? I don't have confident answers to these questions, but below I suggest why wireheading may not be very common. This has implications for what sorts of AIs we expect to see in the coming decades.

An evolutionary history of goal systems

Reflexes

The simplest organisms respond to situations using reflexes—rules like, "When heat receptors on the skin fire too much, withdraw the limb." These stimulus-response processes may involve simple mechanical devices or neuronal reflex rules. Evolution optimizes for more adaptive reflexes based on environmental conditions.

Reinforcement learning

Increasingly sophisticated organisms develop (unconscious) reinforcement learning. Now they not only recoil from noxious stimuli but can also associate environmental conditions with noxious stimuli. So, for instance, a crayfish that tends to get shocked in a given part of the tank learns to avoid that part of the tank. Chains of reinforcement learning can build up to increasingly complex behaviors, such as learning to avoid conditions that tend to lead to conditions that tend to lead to conditions that tend to cause harm.

Reinforcement learning is like an extended reflex, in that it allows for more complex associations of stimuli with future consequences. But it's still limited in its discrimination power: It can only associate environmental states and actions ever more accurately with average future payouts. It's still a model-free learning algorithm that lacks a deeper map of how the world works.
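To make the "model-free" idea concrete, here is a minimal sketch of tabular Q-learning for the crayfish example. Everything specific in it is my own assumption for illustration: the five-cell tank, the shock in the last cell, the function name `train_crayfish`, and the learning parameters. The point is only that the learner ends up with a table of average future payouts and never builds a map of why the shock happens.

```python
import random

def train_crayfish(episodes=500, steps=20, alpha=0.1, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning in a hypothetical 1-D tank with cells 0..4; cell 4 shocks."""
    rng = random.Random(seed)
    n_cells, shock_cell = 5, 4
    actions = (-1, +1)  # move one cell left or right
    q = {(s, a): 0.0 for s in range(n_cells) for a in actions}
    for _ in range(episodes):
        state = rng.randrange(n_cells)
        for _ in range(steps):
            # Epsilon-greedy: mostly exploit current estimates, sometimes explore.
            if rng.random() < epsilon:
                action = rng.choice(actions)
            else:
                action = max(actions, key=lambda a: q[(state, a)])
            # Walls clamp the position to the tank.
            next_state = min(max(state + action, 0), n_cells - 1)
            reward = -1.0 if next_state == shock_cell else 0.0
            # Model-free update: nudge the estimate toward reward plus discounted
            # best estimate at the next state. No causal model is stored anywhere.
            best_next = max(q[(next_state, a)] for a in actions)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q

q_table = train_crayfish()
# Next to the shock cell, moving toward it should look worse than moving away.
print(q_table[(3, +1)], q_table[(3, -1)])
```

The learned table says "moving right from cell 3 is bad" without representing anything about electrodes or experimenters, which is the limitation described above.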

Belief-based egoist rewards

The next level of sophistication that some animals evolve is to have explicit beliefs about the world—causal models of how events unfold and how systems operate. These organisms have beliefs about how actions they take will affect their future rewards. For instance, "If I go out into the cold in search of food, I may be rewarded with sating my hunger even though the cold itself feels unpleasant. I believe this because I saw another animal digging on the ground nearby, which probably indicates the presence of something interesting." Perhaps associational learning would not capture a signal as sophisticated as seeing another animal digging, but belief-based reasoning can.

For a belief-based egoist, part of its reward comes from its beliefs about its own expected sum of future rewards. For instance, it feels relief when it believes that it's safe from predators, even if no raw physical input stimuli have changed.

Note that I'm calling this "belief-based" rather than "model-based" rewards because model-based reinforcement learning is subtly different from what I'm describing here. In model-based learning, an agent constructs a model of how the world works in order to improve its ability to optimize simple rewards (like eating a dot in Pacman or tasting sugar for animals). With belief-based rewards, an agent constructs a detailed model of the world. Then it defines possibly a complex reward function based on its belief that the world is a certain way, and it tries to optimize the external world, not just a simple aggregation of sensory inputs. (I think this is similar to Bill Hibbard's proposal in "Avoiding Unintended AI Behaviors", though I haven't read that paper in detail.) Note that there's a continuum between simple and belief-based rewards. The neural network that tells an animal that sugar tastes good is a very simple model of the world. But the model is not smart enough to, for instance, recognize that (very convincing) artificial sweetener is fake. A more complex model of the world incorporates knowledge that both real sugar and artificial sweeteners exist, that the text on the label of the package I'm using says "artificial," that labels are generally accurate, and so on.

Given beliefs, the organism can now more explicitly optimize the sum total of its expected future rewards in more sophisticated ways than was possible before. Its utility function is

utility = my_happiness := Σ_t (discount_factor^t) * (reward_t).
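As a small worked example of this discounted sum, here is a sketch that compares staying put with going out into the cold for food. The function name, the reward numbers, and the discount factor of 0.9 are assumptions chosen only for illustration.

```python
def my_happiness(rewards, discount_factor=0.9):
    """Discounted sum of rewards, where rewards[t] is the reward expected at step t."""
    return sum((discount_factor ** t) * r for t, r in enumerate(rewards))

# Hypothetical numbers: the cold costs 1 unit now, the food pays 3 units next step.
go_out = my_happiness([-1.0, 3.0])   # -1 + 0.9 * 3
stay_in = my_happiness([0.0, 0.0])   # nothing lost, nothing gained
print(go_out > stay_in)
```

Under these made-up numbers the trip is worth it even though the first step is unpleasant, which is the kind of tradeoff a belief-based egoist can evaluate in advance.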

Belief-based altruism

For some animals, the evolutionarily optimal strategy is not just to optimize the sum of future egoistic rewards but also to care about other things. For instance, in species where parenting is important, a parent may optimize a utility function like

utility = 1 * my_happiness + 0.5 * happiness_of_kid1 + 0.5 * happiness_of_kid2.

Of course, there are also kin-selection effects, and the utility function may additionally include terms for reciprocal trading partners (including spouses and friends):

utility = 1 * my_happiness + 0.5 * happiness_of_kid1 + 0.5 * happiness_of_kid2 + 0.5 * happiness_of_brother + 0.3 * happiness_of_spouse + 0.1 * happiness_of_best_friend.

Happiness of oneself, one's kids, and one's siblings is favored unconditionally in the utility function because these people are direct sources of fitness for one's genes. Happiness of spouses, friends, and other trading partners is favored conditional on reciprocation. If your partner cheats on you, then the term in the utility function for your partner's happiness can become negative. (Think of how many murders are committed between people who used to be romantic partners.)
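The split between unconditional and conditional terms can be sketched in a few lines. The positive weights are the illustrative ones from the formula above; the `betrayal_weight` of -0.3 and the names `altruistic_utility` and `reciprocates` are my own assumptions, not anything measured.

```python
# Kin terms are weighted unconditionally; trading partners only while they reciprocate.
UNCONDITIONAL = {"me": 1.0, "kid1": 0.5, "kid2": 0.5, "brother": 0.5}
CONDITIONAL = {"spouse": 0.3, "best_friend": 0.1}

def altruistic_utility(happiness, reciprocates, betrayal_weight=-0.3):
    """Weighted sum of happiness levels.

    happiness: dict mapping each person to a happiness level.
    reciprocates: dict mapping conditional partners to True/False (default True).
    betrayal_weight: hypothetical negative weight applied after defection.
    """
    total = sum(w * happiness[name] for name, w in UNCONDITIONAL.items())
    for name, w in CONDITIONAL.items():
        weight = w if reciprocates.get(name, True) else betrayal_weight
        total += weight * happiness[name]
    return total

everyone_happy = {name: 1.0 for name in list(UNCONDITIONAL) + list(CONDITIONAL)}
print(altruistic_utility(everyone_happy, {}))
print(altruistic_utility(everyone_happy, {"spouse": False}))
```

With everyone equally happy, the second call scores lower than the first because the spouse's happiness now counts against the total rather than toward it.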

In social species there may be additional terms in the utility function for the happiness of the tribe as a whole. At least humans and perhaps other animals also have non-happiness-based values that are nonetheless useful to care about. Modern times have seen the emergence of all kinds of cultural, social, and intellectual values in people's utility functions, like equality, justice, knowledge, art, religious precepts, tolerance, and so on.[a]

Note that even though humans are much more adaptive than reflex and model-free actors, they're still adaptation-executers, not fitness-maximizers. Their reward functions are based on concrete features of the world (their happiness, others' happiness, equality, etc.) that are proxies for evolutionary fitness. Evolution did not produce organisms that directly optimized reproduction using model-based reinforcement learning. To some extent modern humans approximate fitness maximization when they strategically find ways to optimize their power and wealth, and artificial general intelligences may do this to an even more pure degree.

Freud, willpower, and meaning

While Sigmund Freud's ideas are often rightly criticized in modern times, his model of the id, ego, and superego actually aligns well with the layers of motivation discussed above.[b]

- Id
  - Unconscious, System 1
  - Hard-wired for pleasure
- Ego
  - Conscious, System 2
  - Has a model of reality to better optimize long-term egoist reward
- Superego
  - Conscious, System 2
  - Incorporates altruism and non-hedonic values into decisions

The different systems operate somewhat in tension. We can represent the final output as a weighted sum of utility-function components, but different brain modules may fight more strongly for some of these components than others.

When System 2 overrides System 1, this seems to correlate with what we think of as conscience-driven actions. It's true both for long-term selfishness ("I shouldn't eat that extra piece of vegan cake or else I'll regret it later") and for altruism ("I should donate more toward important charities working to reduce suffering"). Note the word "should" used in both contexts. In general, willpower is important for these "should" feelings to override limbic drives in our decisional output.

The superego seems to also be the main source of "meaning" in people's lives. This is true whether people are raising children, helping friends in need, improving social and political institutions, or advancing values like equality, tolerance, or wisdom in general. The common theme is doing something larger than oneself, i.e., incorporating into one's utility function more than the sum of future egoist rewards.

Why don't organisms wirehead?

In the case of simple reflexes, evolution tries out a large array of possible reflexes and picks those that work best. With reinforcement learning, evolution tries many possible intrinsic rewards, and those that correspond best with evolutionary fitness win out. However, once the right reinforcement algorithm is also evolved, the intrinsic rewards are all that evolution needs to tweak, because the algorithm appropriately propagates the reward associations to other pre-reward environmental states and actions. Organisms can't fake positive intrinsic-reward signals because the organisms are too dumb to know how. Of course, faking reward signals may happen by accident, like when the organism accidentally stumbles upon drugs. If the drugs are frequent enough in the organism's environment, evolution will eventually select for those organisms that don't find the drugs very rewarding.

Reinforcement learners will also tend to wirehead unless they have diminishing marginal utility, boredom, and so on, since without boredom, an agent may continue to exploit a single source of reward that it has found indefinitely until it dies of exhaustion or dehydration. The evolutionary fitness value of marginal resources declines, so the reward value of marginal resources should as well.
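Here is a toy sketch of why declining marginal reward matters. A greedy agent chooses between two hypothetical resources, and each unit consumed shrinks the next unit's reward by a satiation factor. The resource names, base rewards, and the particular satiation formula are assumptions I made up for illustration.

```python
def greedy_choices(base_reward, steps, satiation=0.0):
    """Repeatedly pick the resource with the highest marginal reward.

    The marginal reward of a resource is base / (1 + satiation * times_consumed).
    satiation=0.0 models an agent with no boredom or diminishing returns.
    """
    counts = {name: 0 for name in base_reward}
    for _ in range(steps):
        marginal = {
            name: reward / (1.0 + satiation * counts[name])
            for name, reward in base_reward.items()
        }
        choice = max(marginal, key=marginal.get)
        counts[choice] += 1
    return counts

resources = {"sugar": 1.0, "water": 0.8}  # hypothetical base rewards
print(greedy_choices(resources, steps=10, satiation=0.0))
print(greedy_choices(resources, steps=10, satiation=1.0))
```

Without satiation the agent takes sugar on every step and never drinks. With satiation it splits its time between the two resources, which is closer to what fitness would favor.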

Agents whose rewards are based on beliefs rather than just raw inputs could fake rewards just by changing beliefs, even without directly faking stimuli. For instance, people who believe they'll go to heaven after death are giving themselves artificial reward just by their belief. But it's not easy to fake beliefs. Beliefs are stored in neural connections that the organism cannot consciously update, because there aren't neuronal wires from its cognitive control centers to its belief weights. You can't believe something by sheer power of will. For instance, an organism being chased by a predator can't easily tell itself that it's actually relaxing on the beach (at least not without extensive meditative practice).

Some sexual rewards are pretty dumb—just based on a summation of inputs from erogenous regions of the body, visual cues, and other sensory stimuli. Others, such as the belief that the other animal finds you attractive and actually wants to have sex, are more sophisticated and harder to wirehead. Many aspects of romance are likewise hard to wirehead, because they involve beliefs about the other person loving you, which you can't deliberately force yourself to believe.

An organism might accidentally fake rewarding beliefs. Religion is one example. Wishful thinking in general reflects cases in which organisms stumble upon happy beliefs that they then hold onto via doublethink—convincing themselves that they're justified to hold those beliefs and not facing the cognitive dissonance that would result from examining them critically.

Superstition and OCD are examples in which people believe that rituals improve their future prospects; people thus feel reward from their belief updates upon performing the rituals.[c] There is no real-world improvement in their prospects, but they think there is due to a distorted model.

Organisms that understand their belief processes might also undertake actions that they expect to change their beliefs, such as joining a cult with the expectation that they will become convinced via social persuasion of its tenets, or listening to their favorite ideological news source to debunk a troubling piece of information they discovered. A classic philosophical case of conscious belief manipulation is Nozick's experience machine, which I'll modify for this context to be a machine that would make you believe you, your family, and the world were all perfectly happy, even though they in fact were not.

Why wouldn't people hook up to such an experience machine? The machine would indeed satisfy well the my_happiness part of their utility functions. But it would leave unsatisfied the remaining parts. People would no longer be around to take care of their offspring, siblings, or friends. They would no longer be contributing to the wellbeing of society at large. And they would no longer be creating "real" art or knowledge. So hooking up to the experience machine would decrease people's overall utility relative to their current beliefs, which is why they don't do it. Of course, if they got hooked up anyway, then relative to their new beliefs they wouldn't be troubled, because they would think the world was perfect and wouldn't realize they were in the machine.

Would AIs wirehead?

It seems we have an analogy with animals in the case of AIs. Simple reinforcement learners whose utility functions are based purely on experienced rewards rather than world models would be too dumb to understand wireheading. They might wirehead by accident, by discovering the equivalent of drugs—for instance, a light-seeking robot pressing up eternally against a lightbulb—but if so, they would generally just get stuck and accomplish little of importance.

More sophisticated AIs that have world models and aim to optimize a utility function relative to their beliefs also shouldn't wirehead in general, because either they're too stupid to understand how to wirehead themselves, or if they do understand it, they should not want to do so because they realize it will reduce their goal satisfaction relative to their current beliefs and values. Of course, they may accidentally wirehead, such as by making a bad belief update or not realizing that a self-modification would corrupt their goals. But it doesn't seem that AIs should automatically wirehead as a general rule. (I'm not sure whether this conclusion is correct or not. I have heard of actual AIs that wireheaded themselves, and maybe it's more common than a priori reasoning suggests.)

Indeed, AIs should aim to preserve their utility functions more carefully than most humans do, and they would be better able to do so because their utility functions would be digitally encoded with error correction, presumably much more explicitly than the goals encoded in humans' digital, error-correcting DNA.

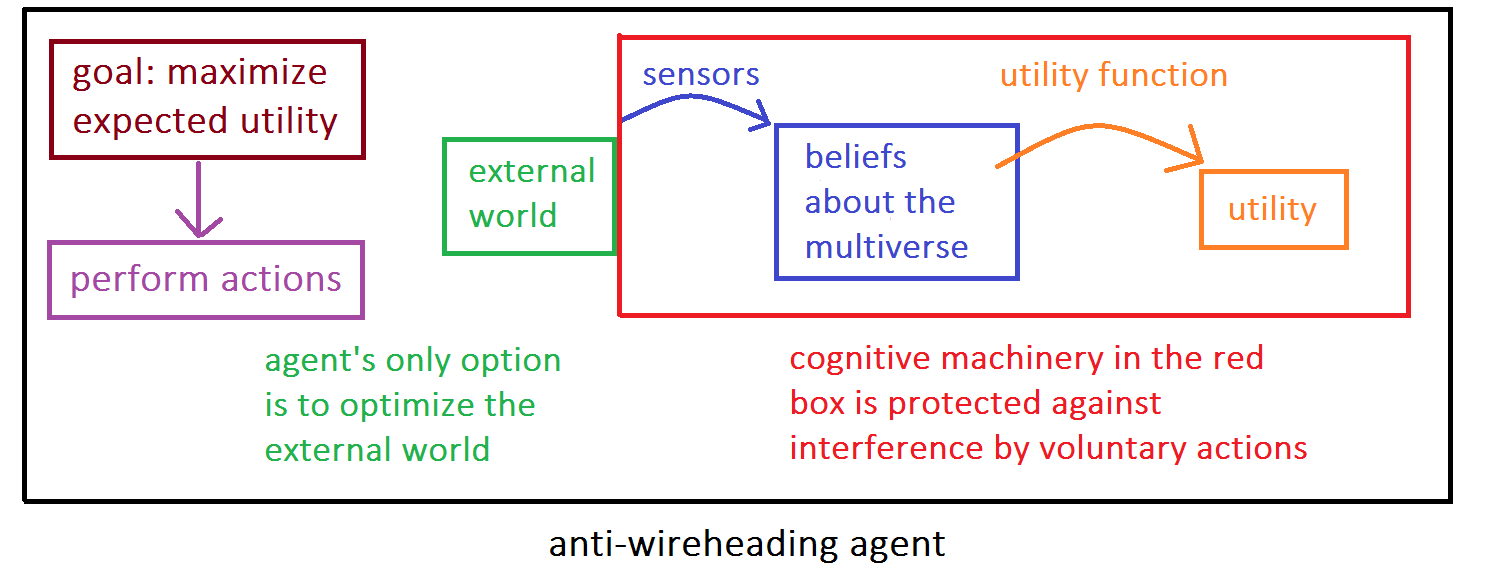

The key to building non-wireheading AIs is to separate belief cognition from action cognition and prevent the agent from choosing actions that mess with its belief cognition:

In principle, if the agent thinks thoroughly about each voluntary action before performing it using the original, unaltered utility-evaluation machinery, then the agent should realize that any actions that distort its belief networks or utility function with the aim of wireheading will have very negative expected utility, since they'll hurt future goal achievement relative to the current (not yet distorted) belief networks and utility function. Hence, if the agent wants to optimize the output of its utility function—and it can't mess with its sensors, beliefs, or utility function directly—its only other option is to optimize the "external world" box so that its utility function increases. This is the intended non-wireheading behavior.

The above diagram assumes fixed belief and utility modules. In practice, an AI would need to self-modify in order to get smarter. So the belief and utility modules couldn't be untampered-with forever. But as argued above, it seems that an AI would take great care to ensure that the updates it did make to those modules wouldn't lead to wireheading, as long as the AI evaluated all proposed self-modifications relative to the unmodified belief and utility modules.

To illustrate the difference between wireheading and anti-wireheading agents, suppose that each agent is considering the possible next action of rewriting the portion of its source code that reports reward values so that the maximal reward value will always be reported no matter what. A wireheading agent thinks, "My goal is to maximize reported reward value. Rewriting my source code to fake maximal rewards is the fastest and surest way to maximize reported reward value, so I'll do that!" A belief-based anti-wireheading agent thinks, "Relative to my current, unaltered cognitive machinery, I anticipate that faking maximal rewards would cause me to stop pursuing further actions to change the external world. I believe this would prevent me from improving what I currently regard as my utility function. Therefore, rewriting my source code to fake rewards would be very bad. Not only should I not do it, but I should exercise a high degree of caution to make sure I don't accidentally wirehead either."

I haven't thought about this problem a lot, but crudely, it seems like the difference comes down to whether the agent is maximizing utility as evaluated after the action has been taken (wireheading) or before (anti-wireheading). If evaluation occurs after the action has been taken, then the agent can choose to take an action which says that it should rewrite its utility function so that

utility(the action I just took) = highest possible value.

If evaluation occurs before the action is taken, then the agent can't cheat so easily. Even if the agent evaluates actions based on a pre-action utility function, it might still wirehead in the sense that its utility function is limited (e.g., the light-seeking robot continually bumping up against a lightbulb), but this is different from actively wireheading itself via clever source-code rewrites.
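The before-versus-after distinction can be sketched as a toy program. This is only a crude illustration under my own assumptions: the world is a single counter called "good", there are just two actions, and the names `choose_action`, `improve_world`, and `rewrite_utility` are hypothetical. Real agents would be far messier.

```python
MAX_UTILITY = 1_000_000.0

def original_utility(world):
    """Hypothetical goal: the agent values units of 'good' in the external world."""
    return float(world["good"])

def improve_world(world, utility_fn):
    # Changes the external world and leaves the evaluator untouched.
    return {"good": world["good"] + 1}, utility_fn

def rewrite_utility(world, utility_fn):
    # Leaves the world alone and swaps in an evaluator that always reports the maximum.
    return dict(world), (lambda _world: MAX_UTILITY)

ACTIONS = {"improve_world": improve_world, "rewrite_utility": rewrite_utility}

def choose_action(world, utility_fn, evaluate_with):
    """Pick the best action, judged by the pre-action or post-action utility function."""
    if evaluate_with not in ("pre", "post"):
        raise ValueError("evaluate_with must be 'pre' or 'post'")

    def score(name):
        new_world, new_utility_fn = ACTIONS[name](world, utility_fn)
        judge = utility_fn if evaluate_with == "pre" else new_utility_fn
        return judge(new_world)

    return max(ACTIONS, key=score)

start = {"good": 0}
print(choose_action(start, original_utility, "post"))  # judged by the rewritten evaluator
print(choose_action(start, original_utility, "pre"))   # judged by the current evaluator
```

Judged after the fact, the rewrite looks unbeatable because the new evaluator says so. Judged by the current evaluator, the rewrite leaves the world no better than before, so improving the world wins.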

Goal specification is still hard

Even if AIs wouldn't wirehead in the sense of deliberately fooling themselves, this doesn't mean they would have values similar to those of humans. It still takes a lot of work to specify the content of their belief-based rewards. For instance, just delineating the boundaries of what's a human being from inanimate matter requires many common-sense principles that would need to be programmed in or taught.

Human genes use heuristics to encourage gene replication, but their "goal" of reproducing themselves is poorly coded. Humans miss the mark and are currently in rebellion against the goals that their genes "intended." The same is likely true of many naive utility functions that one might build into an AI.

Would AIs colonize space?

Many AIs, especially in the near term, will be simple reinforcement learners, on the order of animals in intelligence. They would usually optimize their behavior based on rudimentary reward signals, without explicit world modeling. These would be too dumb to colonize space, but they also generally seem too dumb to cause all-out human extinction. Even an animal-like AI whose goal was to shoot any human it could find would probably not be smart enough to overcome human military power against it. This would leave humans around to colonize space via other, future AIs.

AIs whose rewards are based on beliefs about the world might be smart enough to seize control over Earth's resources. Would they then be content to stay on Earth? Possibly if they were given a utility function specific to Earth, but most likely they would have more generic utility functions according to which it would be important to spread into space—if only to gather more computational power to become smarter, protect themselves, and further study how to best advance their goals. It seems this kind of colonization would be a convergent outcome of almost any goal system that's intelligent enough to optimize based on sophisticated beliefs about the external world.

Society's trajectory as wireheading

A friend of mine pointed out that society as a whole is moving somewhat in the direction of wireheading, with junk food, movies, video games, sex with birth control, spiritual devotion, and other ways of satisfying our utility functions that don't enhance our survival and power. In the long run, there's a disconnect between the original signals that were once adaptive and what they motivate us to do in our present circumstances. In this sense, many human values are now like wireheading, and they are maladaptive relative to a hypothetical competitor species which lacked them. This is the point that Nick Bostrom raises in "The Future of Human Evolution."

Idealizing preferences?

The preceding discussion is relevant to the debate between hedonistic and preference utilitarianism. Rudimentary reinforcement signals, when made conscious, represent the rawest forms of hedonism. More sophisticated hedonistic utilitarians may also count belief-based instances of personal happiness and suffering. Preference utilitarians extend ethical consideration to an agent's entire utility function, whether it concerns his own welfare or not. Idealized-preference utilitarians focus not on what people actually want but on what they would want if they knew more, had more experiences, had more time for reflection, etc.

Reinforcement signals serve as a crude tracking mechanism for evolutionary fitness. Belief-based motivations allow for more sophisticated optimization of fitness in ways that simple reinforcement would have missed. More complex beliefs track fitness better and better. Increasing sophistication allows organisms to better optimize against what's "out there," as their internal models better match the external world. Does this mean idealized preferences should perfectly optimize fitness against the external world?

This is to me a concerning idea. Increasingly complex neural systems are increasingly optimizing an organism's ability to spread copies of its genes. One natural idealization is to better and better optimize the organism's power and ability to make copies of itself. After all, that is the feature of the external world that all these approximations were trying to track. Yet I don't actually care intrinsically about propagation of my genes; this is not the purpose of my life or even ethically important at all. I find my actual purpose elsewhere, in various spandrel impulses that fire in various ways in my brain.

The way I see preference idealization is rather different: It's about imagining where the current adaptation executer that you are would go upon learning more, having more experiences, and so on. It does mean looking at what you intended rather than what you said when you made a wish to a genie, but it doesn't mean looking at what the genes intended when they brought you into existence in the first place.

As we can see, there are many levels at which we could idealize preferences: The preferences of an existing organism, the "preferences" of her genes, the "preferences" of the physical processes that created those genes (e.g., entropy production), and so on. Most of us seem to care most about the first of these.

I personally feel that something closest to hedonic experience is most morally important. This often, but not always, coincides with an organism's revealed preference, since an animal's "will to live" brain submodules may overpower "I don't like this" brain submodules. We can verify this in people by, e.g., asking a person enduring torture, "Would you rather be unconscious right now?"

Rather than thinking "there's one true goal / utility function / value of an organism, which we should figure out and optimize", I prefer to look at the thousand shards of desire that comprise an organismic system and weigh which ones I care about more. After all, there's no non-arbitrary way to apportion moral value among these many (sometimes conflicting) components.

Acknowledgments

When I met Jürgen Schmidhuber on 24 Feb. 2014, I asked whether he thought AIs were likely to wirehead. He pointed out that those that do not would be the ones to survive, and he knows many examples of intelligences who refuse to wirehead. Further thoughts about wireheading then emerged from a discussion with Lukas Gloor on 28 Feb. 2014. On 10 March 2014, after writing this piece, I discovered "The Human's Hidden Utility Function (Maybe)" by Luke Muehlhauser, which presents a breakdown of goal systems quite similar to the one I suggested. Tim Tyler's "Rewards vs Goals" highlights the idea that separating beliefs from rewards helps avoid wireheading.

Footnotes

- [a] As an aside, we can note that hedonistic utilitarians, when summing utility over individuals, only count the my_happiness part of people's utility functions. Why is this? Maybe they think that part dominates, and the other components of utility can be ignored. Maybe they have intuitions against "double counting" people both in their direct happiness and how their happiness is valued by others. Maybe it's because hedonistic utilitarians are, so to speak, "egoistic altruists," that is, they maximize their personal utility, but they're altruistic in that they extend their personal utility to be the following formula:

utility = 1 * my_happiness + 1 * happiness_of_kid1 + 1 * happiness_of_kid2 + 1 * happiness_of_brother + 1 * happiness_of_spouse + 1 * happiness_of_best_friend + 1 * happiness_of_stranger1 + 1 * happiness_of_stranger2 + ....

My friend Joseph Chu suggested to me that the reason he's a hedonistic utilitarian is not any of the above but instead because he doesn't "think of it as summing over other people's utility functions at all, but rather as summing an intrinsic good (happiness)."

- [b] Edmund T. Rolls makes a similar point in Emotion Explained, p. 416, footnote 35, when discussing fast vs. slow reward-seeking behavior.

- [c] I believe I heard of this point in this podcast.