Summary

This piece outlines some arguments for and against the view that the ethical importance we place on suffering and happiness depends on the size and complexity of the brain experiencing them. In favor of weighting by an increasing function of brain size are the observations that a brain could be split in half to create two separate individuals and that big brains perform many parallel operations. The approach favoring size neutrality points out that an individual organism can be interpreted as a single, unified agent with its own utility function, and that to a tiny brain, an experience activating just a few pain neurons could feel like the worst thing in the world from its point of view.

I remain genuinely undecided on the question, but I think it's clear that neither pure size weighting nor pure equality weighting is quite right. At the very least, small brains plausibly deserve more weight than their relative number of neurons would suggest, because small animals are more optimized for efficiency. On the other hand, large brains could contain subcomponents that are sufficiently isolated as to resemble small individual brains.

Note: In this piece, I unfortunately conflate "brain size" with "mental complexity" in a messy way. Some of the arguments I discuss apply primarily to size, while some apply primarily to complexity. When we look at animals collectively, there is a correlation between brain size and cognitive complexity; for example, primates and cetaceans have both bigger brains and more sophisticated minds than insects. However, there may be many cases where this correlation does not hold. Proctor (2012) argues that "Total brain size has also been shown to be a poor indicator for both intelligence and sentience, and many now argue that it should be the complexity of the brain’s function that is considered in regards to welfare, rather than its size."

Contents

- Summary

- Introduction

- No objective answer to this question

- Biases affecting our judgments

- Arguments for brain-size weighting

- Arguments for equality weighting

- Reductio against equality? Binary utility function

- Big brains are not just many copies of little brains

- Beauty-driven intuitions

- Mind boundaries are not always clear

- Small brains matter more per neuron

- Other measures besides number of neurons

- Counting independent subcomponents?

- Physical or algorithmic size?

- Do "real" brains matter more than simulated?

- Intuitions about a China brain

- Value pluralism

- "Unequal Consideration" vs. "Unequal Interests"

- Acknowledgments

- Footnotes

Introduction

And the poor beetle, that we tread upon,

In corporal sufferance finds a pang as great

As when a giant dies.

(William Shakespeare, Measure for Measure)

A person's a person, no matter how small.

(Dr. Seuss, Horton Hears a Who!)

We have strong intuitions that every individual deserves equal moral consideration. "All men are created equal," said the US Declaration of Independence. "[E]verybody to count for one, nobody for more than one" was a slightly misquoted dictum from Jeremy Bentham. This works well enough in the human domain, where most humans are structurally similar. But how do we extend it to animals?

The standard approach among animal-rights philosophers seems to be to defend pure equality for non-humans as well. So, for instance, a mouse in pain deserves equal moral weight for that pain as a dog or a whale experiencing a similar level of distress. On the other hand, laypeople have strong intuitions that bigger animals matter more; for instance, even if an ant can consciously suffer, its suffering doesn't matter as much as that of an elephant.

Even people who profess to believe in equality for all animals are likely in practice to be more upset at, say, the killing of a dolphin than the killing of a minnow. As the pigs decreed in the last chapter of George Orwell's Animal Farm: "All animals are equal, but some animals are more equal than others."

No objective answer to this question

!['Human brain - left and right hemispheres - superior-lateral view.' By John A Beal, PhD Dep't. of Cellular Biology & Anatomy, Louisiana State University Health Sciences Center Shreveport [CC-BY-2.5 (http://creativecommons.org/licenses/by/2.5)], via Wikimedia Commons: https://commons.wikimedia.org/wiki/File:Human_brain_superior-lateral_view.JPG](/wp-content/uploads/2014/09/Human_brain_superior-lateral_view.jpg)

One point to clarify at the outset is that this question has no "right" answer because it's fundamentally a moral question. "Conscious emotion" is a category into which we place mental operations, and "amount of conscious emotion" is a normatively laden metric that we decide upon, based on features of the mind that we find morally compelling. We can make arguments to each other that one or another metric jibes better with our intuitions, but nobody is compelled to accept a given weighting scheme; it's up to your heart to decide.

In the remainder of this piece, I sketch arguments on both sides of the debate. I remain very unsettled, and this is one of the issues on which I have highest moral uncertainty. I think many neuroscience-inclined people tend to assume size weighting because their business is neurons and synapses, so those seem like the natural things to focus on. However, this ignores the alternate view of a mind as a unified agent with its own utility function, no matter its size.

Biases affecting our judgments

Demandingness

People have a vested interest in disregarding small brains because, frankly, life is a lot harder when you have to worry about them. The possibility of giving equal weight to mice and minnows, much less insects, is not a thought most people want to entertain. This should make us somewhat suspicious of size-weighting views, although one could just as well say that the widespread intuition that insects matter less is a demonstration that many people do in fact care less about smaller brains. Still, consider what we would think if we were the tiny ones. Would we be okay with giants squishing us because they couldn't bother to watch where they were stepping?

Stuart Russell and Peter Norvig actually raise this point in Artificial Intelligence: A Modern Approach:

We can't just give a program a static utility function, because circumstances, and our desired responses to circumstances, change over time. For example, if technology had allowed us to design a super-powerful AI agent in 1800 and endow it with the prevailing morals of the time, it would be fighting today to reestablish slavery and abolish women's right to vote. On the other hand, if we build an AI agent today and tell it how to evolve its utility function, how can we assure that it won't read that "Humans think it is moral to kill annoying insects, in part because insect brains are so primitive. But human brains are primitive compared to my powers, so it must be moral for me to kill humans."

The mini-series Delete echoes this idea: In it, the world-conquering AI reminds protagonist Daniel Gerson that he too was willing to crush ants in his ant farm if they challenged his authority.

DeGrazia (2008) acknowledges that the burdensomeness of caring for insects may be a factor in our assessing them as less morally relevant, conditional on their being found sentient (pp. 194-95):

But suppose, as surely is conceivable, that evidence emerges that compellingly supports the assertion that insects are sentient. Would that oblige us to work very hard not to harm insects? Presumably not; any such obligation would seem excessively demanding. Yet if all sentient beings have equal moral status and insects are sentient, it would seem that we would be obliged to take insects quite seriously indeed. This is highly counterintuitive. Moreover, if all who have moral status have it equally, then we should right now be very invested in the question of whether insects are sentient. If they are, then we are routinely harming trillions of beings with full, equal moral status—a very serious matter. The commonsense reaction that we need not be so concerned with the question of whether insects are sentient suggests that, if they are, their moral status is less than ours, implying that not all who have moral status have it equally.

Dualist confusion

On the flip side, a common bias in favor of equality is dualist confusion about consciousness. If consciousness is a binary property, which you can't see or weigh or reduce to component parts, it's natural to assume that it's equal for all brains that have it. When we instead internalize the physicalist understanding of consciousness as a collection of computations, the idea of treating all conscious minds as equal is almost obviously untenable, because the minds may be very different in construction, function, and complexity. The question is then not whether brain size matters but whether it matters a little or a lot.

Arguments for brain-size weighting

Why might we think that bigger brains have more ethical importance? This section reviews a few of the common arguments.

Dominance argument

Robert Wiblin proposed the following argument. Imagine a Mouse. Then imagine a Mouse+ that has the same brain as the Mouse but with lots of extra machinery. It can do everything the Mouse could and then a lot more. Perhaps the extra features of the mind should count for more than nothing.

I call this a "dominance argument" in analogy with the concept of strategic dominance in game theory. For example, when comparing strategies A and B, saying that "B weakly dominates A" means "There is at least one set of opponents' action for which B is superior, and all other sets of opponents' actions give B the same payoff as A." In Wiblin's argument, we might say that Mouse+ weakly dominates Mouse because there is at least one cognitive ability for which Mouse+ is superior, and Mouse+ is at least equally good as Mouse with respect to all other cognitive abilities.
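The weak-dominance check can be written out as a minimal sketch. It assumes each mind is summarized by a hypothetical table of ability scores; the ability names and numbers are illustrative only, not measurements of any real animal.

```python
def weakly_dominates(b, a):
    """Return True if profile b weakly dominates profile a.

    b must be at least as good as a on every ability and strictly
    better on at least one. Abilities missing from a profile count as 0.
    """
    abilities = set(a) | set(b)
    at_least_as_good = all(b.get(k, 0) >= a.get(k, 0) for k in abilities)
    strictly_better = any(b.get(k, 0) > a.get(k, 0) for k in abilities)
    return at_least_as_good and strictly_better


# Hypothetical ability scores, chosen only for illustration.
mouse = {"learning": 3, "memory": 2, "navigation": 4}
mouse_plus = {"learning": 3, "memory": 2, "navigation": 4, "planning": 5}

print(weakly_dominates(mouse_plus, mouse))  # True
print(weakly_dominates(mouse, mouse_plus))  # False
```

Note that dominance only gives an ordering: it says Mouse+ should count for at least as much as Mouse, not how much more.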

The concept of "consciousness" is complex

In my view, consciousness is not an ontologically basic property of the universe but is rather a concept that we attribute to physical systems. This concept is complex and includes many components, such as reactivity, learning, memory, self-modeling, and so on. More complex brains will generally have more of the attributes that fall under our concept of consciousness, and these attributes may be present to a more pronounced degree.

For example, while many animals probably have some degree of self-awareness, animals who can pass a form of the mirror test (which are often animals with large and complex brains) plausibly have more self-awareness than those who can't, on average. Hence, mirror-test passers can be said to match the concept of "consciousness" to a somewhat higher degree.

Another way to explain this point could be to imagine a formula for a mind's degree_of_consciousness, which is a sum of various abilities, like

degree_of_consciousness = learning_ability + memory_capacity + information_integration + ... + higher_order_thoughts.

A more complex brain has larger values for the summands in this expression, so the sum is also larger.

What if you think that consciousness is crisply defined? For example, suppose you think that a specific cognitive ability X or a specific computational pattern Y is crucial for consciousness. A more complex brain will tend to have more cognitive abilities, and a larger brain will tend to carry out more total computational processing. So other things being equal, a more complex or larger brain is more likely to contain the crucial element X or Y. On this view, the degree_of_consciousness of a mind would be just

degree_of_consciousness = 1 if X or Y is present, 0 otherwise.

A more complex or larger mind is more likely to have X or Y present. (Of course, this presumption could be overruled upon learning more about the specific brains in question; there might be some simple/small brains that contain X or Y and complex/large brains that don't.)
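The graded and crisp pictures of degree_of_consciousness can be contrasted in a short sketch. The attribute scores and the feature labels are placeholders invented for the example, not claims about real brains.

```python
def graded_degree(attributes):
    """Sum-of-abilities picture: more or stronger attributes give a larger total."""
    return sum(attributes.values())


def crisp_degree(features, crucial=("X", "Y")):
    """Crisp picture: 1 if any crucial element is present, 0 otherwise."""
    return 1 if any(f in features for f in crucial) else 0


# Hypothetical attribute scores, for illustration only.
simple_brain = {
    "learning_ability": 1,
    "memory_capacity": 1,
    "information_integration": 0.5,
}
complex_brain = {
    "learning_ability": 4,
    "memory_capacity": 5,
    "information_integration": 3,
    "higher_order_thoughts": 2,
}

print(graded_degree(simple_brain))   # 2.5
print(graded_degree(complex_brain))  # 14
print(crisp_degree({"X"}))           # 1
print(crisp_degree({"Z"}))           # 0
```

On the crisp picture, size or complexity matters only through the probability that the crucial element is present; once it is present, the degree doesn't grow further.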

Insulator thought experiment

In "Quantity of experience: brain-duplication and degrees of consciousness," Nick Bostrom discusses a thought experiment involving the insertion of insulators through conducting wires. Given a digital brain, suppose you innocently slide an electrical insulator down the middle of its wires, to the point that current now doesn't cross the gap. Have you thereby doubled the amount of subjective experience? We might say no, because no big change has happened. But if not, then each sub-brain must be worth half as much as the original, which means the bigger brain must have been worth twice as much, i.e., its worth was proportional to its size.

Bostrom actually disagrees with this view. He thinks insertion of the insulator is the crucial step in making one mind become two, because after the insulator is inserted, the two halves become "counterfactually unlinked." In this case, the size of the wires is not relevant. I may agree with Bostrom here: Size of the wires is not relevant, or at least not very relevant.

Almond (2003-2007) considers a similar thought experiment and argues that the thickness of a computer's wires does affect the moral value of a mind running on it: "All else being equal, it seems that the greater the degree of redundancy in the substrate, the greater the value that we should assign to that substrate."

More bandwidth

In "Global Workspace Dynamics: Cortical 'Binding and Propagation' Enables Conscious Contents," the authors note the importance of high bandwidth for the "any-to-many" broadcasting that's thought to correspond to consciousness:

Notice that "any-to-many" signaling does not apply to the cerebellum, which lacks parallel-interactive connectivity, or to the basal ganglia, spinal cord, or peripheral ganglia. Crick and Koch have suggested that the claustrum may function as a [global workspace] GW underlying consciousness. However, the claustrum, amygdala, and other highly connected anatomical hubs seem to lack the high spatiotopic bandwidth of the major sensory and motor interfaces, as shown by the very high-resolution of minimal conscious stimuli in the major modalities. [...] The massive anatomy and physiology of cortex can presumably support this kind of parallel-interactive bandwidth. Whether structures like the claustrum have that kind of bandwidth is doubtful. The sheer size and massive connectivity of the [cortico-thalamic] C-T system suggests the necessary signaling bandwidth for a human being to see a single near-threshold star on a dark night.

Perhaps small amounts of bandwidth correspond to lower degrees, or at least lower sensory resolutions, of conscious experience. On the other hand, insofar as the global-workspace model depicts consciousness as power held by some neuronal coalitions, we might apply per-brain normalization (since power is always relative to how strong your competitors are), and in this case, we wouldn't necessarily scale our assessment of consciousness with brain size.

More parallel operations

Bigger brains do more parallel operations. Vallentyne (2005), p. 406:

The typical human capacity for pain and pleasure is no less than that of mice, and presumably much greater, since we have, it seems plausible, more of the relevant sorts of neurons, neurotransmitters, receptors, etc.

For instance, humans have about 10^3 times as many neurons as rodents, and hedonic hotspots in humans are on the order of a cubic centimeter, compared with a cubic millimeter in rodents, which is also a difference on the order of 10^3.

Also, the hemispheres of split-brain patients can operate independently, demonstrating that the halves are not so interconnected as to fail without communication.

If we attribute moral importance to a collection of various cognitive processes, then if we figuratively squint at the situation, we can see that in a bigger animal, there's some sense in which the cognitive processes "happen more" than in smaller animals, even if all that's being added are additional sensory detail, muscle-fiber contractions, etc., rather than qualitatively new abilities.

Degrees of consciousness within a mind

In her lecture on "The Neuroscience of Consciousness," Susan Greenfield suggests that consciousness comes in degrees even in humans. For instance, there are technologies to measure the depth of anesthesia. Sleep, drug use, partying, mental disorders, and other altered states of consciousness seem to involve different degrees of awareness and phenomenal depth. (For instance, I remember being shot in one of my dreams, and it hurt a fair amount but not nearly as much as it would have in real life.) Non-REM sleep—the "least conscious" states that we experience most regularly—involves appreciably different EEG patterns from awake states and dreaming REM sleep.

Greenfield suggests that we think of consciousness not like a light that's on or off but like a dimmer switch. She proposes a model in which neuronal assemblies broadcast consciousness through a brain, similar to a stone generating ripples in a pond. The ripple becomes more conscious when the stone (input stimulus) is bigger, thrown harder, and has a balance of modulatory chemicals that helps it expand. The size of these conscious neuronal assemblies is reduced in a continuous manner with anesthesia. They're also smaller in childhood, schizophrenia, sleep, and so on. However, Greenfield notes that pleasurable activities where you lose your sense of self (including arousal, drug use, etc.) yield smaller neuronal assemblies in cortical regions, and yet these are some of the most emotionally intense states, so we should be cautious about conflating Greenfield's notion of "consciousness" with "degree of emotional intensity." Greenfield notes that animals are probably less cognitively conscious than humans but clearly live in a world of rich emotion.

Other moral values plausibly scale with complexity

Imagine that you don't care at all about conscious experiences but instead place intrinsic moral value on helping companies grow and prosper. It seems plausible that someone with this moral framework should care more about the success of General Electric than about the success of a two-person startup company, because General Electric is larger and has more going on in it. In a sense, General Electric is "more of a company" than a tiny startup is.

Alternatively, imagine that you place intrinsic value on art. It seems plausible that a very large and detailed painting has more moral importance than a small, simpler painting. Indeed, we could imagine cutting up the large painting into four pieces, and it would seem odd if merely doing that would multiply the moral value of that painting by four times.

Of course, consciousness is not the same as corporate success or art, but perhaps similar intuitions translate to the case of consciousness.

Arguments for equality weighting

I feel the size-weighting view fails to capture holistic features of a mind that are morally relevant. Here are some intuition pumps for equality weighting.

Argument from marginal cases

The argument from marginal cases is typically applied against speciesism, but it also has some force against brain-size weighting. Men have slightly bigger brains on average than women, and there are other differences in brain size and constitution among humans. If more complex brains matter more, then do people with permanent intellectual disabilities matter less? If not, why not?

A defender of complexity-weighting might point out that we have good instrumental reasons to maintain that "all people are equal" as a legal and moral principle to forestall social animosity and invidious distinctions. People are close enough that we shouldn't amplify the subtle differences, especially since the idea that some humans are superior to others has been misused to cause immense suffering in the past. Cognitive differences among members of a single species could be seen as a topic that's politically incorrect to discuss. Both sides of the debate agree we shouldn't place moral weight on brain-complexity differences among humans; the question is just whether this is viewed as an instrumental or intrinsic stance.

But does intelligence or cognitive ability matter? Sometimes people laugh at insects for being so stupid, such as when moths try to fly toward a light incessantly. "How could something so stupid be morally important?" goes the thinking. Yet what about humans in a similar situation? Consider a depressed person who could, if she were smart enough, go to a psychiatrist and ask for exactly the right medication to remove her depression. "How could she be so stupid not to do that?" we might ask. To a superintelligence, it would seem straightforward what to do in this situation, but it's not to the person herself. Why should we treat insects differently? Or are we okay with superintelligences not caring about us despite our limited insight into how to solve our problems?

And what about raw size? It matters intuitively to us, but sometimes these intuitions go astray. Some military-drone operators refer to civilian casualties as "bug splats" because the people killed appear as small as bugs. The campaign "NotABugSplat" aims to counter this tendency. Likewise, when we see insects from close up, we start to empathize with them more. They start to feel more "real" to us. But whether we happen to see something from far away or close up isn't morally relevant. Hence, maybe size matters less than we're disposed to believe. In response, an advocate for size weighting would agree that we're intuitively biased based on visual size but then go on to emphasize that bigger brains have many small, bug-sized components, so they have to add up to more, in a similar way as a nation has to add up to more than a single person.

Legal precedent

Consider the following science-fiction scenario. A mad scientist cuts open your brain, pulls out half of it, and sticks that half into a previously dead human body. The scientist rewires both of your brains so that the two human organisms can now live and operate in the world (even if they don't have the same degree of intelligence or ability as you formerly had). With the scientist's help, the brains rewire themselves to adapt to having only half as many neurons.

In this scenario, the two people at the end of the mad scientist's experiment would have twice the legal importance of the original person: They'd get twice as many votes (assuming they weren't "mentally incapacitated"), twice as many government-assistance payments, etc. Moreover, I suspect most people would agree the two people deserve more moral concern than the original one person, even if perhaps not exactly twice as much moral concern.

Feeling of mental unity

We have a perception (or, some say, illusion) of mental unity. For instance, when our brain takes in signals of related emotions that are in conflict, it often feels as though the emotions "cancel each other out" before we perceive the final number; we don't perceive all the individual parts together. Maybe the actual combination was 3 + 1 - 4 + 2, but we're only conscious of the sum total, 2. Or is it an illusion of the unified self to think we're only conscious of the final sum total? It may depend partly on where you draw the line between conscious perceptions and unconscious operations / aggregations.

Signal strengths are relative, not absolute

It may be that the amount of neural tissue that responds when I stub my toe is more than the amount that responds when a fruit fly is shocked to death. (I don't know if this is true, but I have almost a million times more neurons than a fruit fly.) However, the toe stubbing is basically trivial to me, but to the fruit fly, the pain that it endures before death comes is the most overwhelming thing in the world. There may be a unified quality to the fruit fly's experience that isn't captured merely by number of neurons, or even a more sophisticated notion of computational horsepower of the brain, although this depends on the degree of parallelism in self-perception.

As another example, imagine the following:

Harry and Sam

Harry the Human is doing his morning jumping jacks, while listening to music. Suddenly he feels a pain in his knee. The pain comes from nociceptive firings of 500 afferent neurons. At the same time, Harry is enjoying his music and the chemicals that exercise is releasing into his body, so his brain simultaneously generates 500 "I like this" messages. Harry is unsure whether to keep exercising. But after a minute, the nociceptive firings decrease to 50 neurons, so he decides his knee doesn't really hurt anymore. He continues his jumping routine.

Meanwhile, Sam the Snail is sexually aroused by an object and is moving toward it. His nervous system generates 5 "I like this" messages. But when he reaches the object, an experimenter applies a shock that generates 50 "ouch" messages. This is the same as the number of "ouch" messages that Harry felt from his knee at the end of the previous example, yet in this case, because the comparison is against only 5 "I like this" messages, Sam wastes no time in recoiling from the object.

Now, we can still debate whether the moral significance of Harry's 50 "ouch" messages equals that of Sam's 50, but to each organism himself, they're like night and day. Sam hated the shock much more than Harry hated his minor knee pain. Sam might describe his experience as one of his most painful in recent memory; Harry might describe his as "something I barely noticed."[a]

In reply to this, Carl Shulman observed:

Harry could still make choices (eat this food or not, go here or there) if the intensity of his various pleasures and pains were dialed down by a factor of 10. The main behavioral disruption would be the loss of gradations (some levels of relative importance would have to be dropped or merged, e.g. the smallest pains and pleasures dropping to imperceptibility/non-existence).

But he would be able to remember the more intense experiences when [in the past] he [had] got[ten] 10x the signals and say that those were stronger, richer, more intense, more morally noteworthy.

Depending on how Harry's brain encodes past emotional events, it might indeed be the case that if Harry's pleasures and pains were weakened by 10 times, he would still recall the past experiences as having been stronger. But the comparison is still relative to Harry's starting point. If Sam's pleasures and pains were reduced in intensity by 10 times, Sam too would report that his past experiences had been stronger.
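The Harry and Sam comparison, along with the effect of dialing every signal down by a factor of 10, can be made concrete with a small sketch. It assumes, purely for illustration, that an organism's felt balance is the share of "ouch" messages among all valenced messages; `ouch_share` is a hypothetical measure, and nothing in the example depends on it being the right one.

```python
import math


def ouch_share(ouch, like):
    """Hypothetical measure: fraction of valenced messages that are 'ouch'."""
    return ouch / (ouch + like)


harry_start = ouch_share(ouch=500, like=500)
harry_later = ouch_share(ouch=50, like=500)
sam_shock = ouch_share(ouch=50, like=5)

print(f"Harry at first: {harry_start:.2f}")  # 0.50
print(f"Harry later:    {harry_later:.2f}")  # 0.09
print(f"Sam shocked:    {sam_shock:.2f}")    # 0.91

# Dialing every signal down by a factor of 10 leaves the share unchanged,
# so the within-organism comparison survives even though absolute counts shrink.
sam_dialed_down = ouch_share(ouch=50 / 10, like=5 / 10)
print(math.isclose(sam_dialed_down, sam_shock))  # True
```

The same 50 "ouch" messages give very different shares for Harry and Sam, which is the agent-relative reading. The absolute counts, which a size-weighting view would track, are identical.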

Reductio against equality? Binary utility function

The example of Sam the Snail suggested looking at organisms from the agent's-eye view. Each individual has its own utility function (at least in a crude sense, if not a literal, consistent von Neumann-Morgenstern utility function), and what matters to the individual is how well or badly it's doing relative to the range of possible experiences it can have, not the absolute mass of pain signals it receives.

However, this view could be taken to an extreme. Giego Caleiro referenced Daniel Dewey as having made the following point:

if the simplicity of the notional world an animal brain/mind/computer has decreases, it seems possible that each computation is more "intense" in that it represents a larger subset of the whole of possible spaces that mind can be in. So a simple pain not-pain system would experience cosmic amounts of pain. Whereas a complex intricate system, like us, would experience pain as a small subset of its processing.

Here's a potential real-world example:

In computer architecture, a branch predictor is a digital circuit that tries to guess which way a branch (e.g. an if-then-else structure) will go before this is known for sure. The purpose of the branch predictor is to improve the flow in the instruction pipeline. Branch predictors play a critical role in achieving high effective performance in many modern pipelined microprocessor architectures such as x86.

A branch predictor can be seen as an extremely simple "agent" whose "goal" is to ensure that the correct branch of instructions is fetched. The agent can either succeed or fail at this goal, and failure (which does happen occasionally) is in some sense "the worst possible outcome" relative to the agent's goals.[b] Yet a failed prediction by a branch predictor seems morally trivial compared with instantiation of (nearly) the worst possible outcome for a more complex agent, such as a zebra being shredded to pieces while conscious.

One solution is to only consider agents with at least a minimum level of brain complexity and then apply equality weighting among them. However, this seems intuitively less attractive than using a continuous weighting function, since otherwise we'd encounter absurd edge cases on the boundary of inclusion.
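To see why a hard cutoff creates edge cases, here is a sketch contrasting a threshold rule with one possible continuous weighting. The `complexity` measure, the cutoff value, and the logistic form with its parameters are all assumptions made for illustration; the text doesn't commit to any particular function.

```python
import math


def threshold_weight(complexity, cutoff=1000.0):
    """Equality weighting above a minimum complexity, zero below it."""
    return 1.0 if complexity >= cutoff else 0.0


def continuous_weight(complexity, midpoint=1000.0, steepness=1.0):
    """Smooth alternative: logistic in log10(complexity), between 0 and 1."""
    x = steepness * (math.log10(complexity) - math.log10(midpoint))
    return 1.0 / (1.0 + math.exp(-x))


# Three agents that differ only negligibly in complexity.
for c in (999.0, 1000.0, 1001.0):
    print(c, threshold_weight(c), round(continuous_weight(c), 4))
```

Under the threshold rule, the agent at 999 counts for nothing while its near-twin at 1000 counts fully. The continuous rule gives the three nearly identical weights.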

Big brains are not just many copies of little brains

One of the strongest arguments why size should matter is that big brains may contain lots of subcomponents, each of which could be seen as similar to a little brain. But it's not clear this is actually true, because little brains are whole units, while big brains have more specialization in their subcomponents.

In "Can neuroscience reveal the true nature of consciousness?," Victor A.F. Lamme suggests the hypothesis that consciousness happens when a neural network becomes recurrent, i.e., feeds back on itself in a loop. He presents evidence for this in the case of the visual system. Obviously this is an oversimplified picture, but we can use it for illustration. A big mind with a recurrent loop we could picture as a big circle, and a small mind with a recurrent loop we could picture as a small circle. If we say that being a circle of some sort is the crucial feature of consciousness, then we'd treat these two minds equally. If we think circle size also matters, we wouldn't treat them equally. Of course, in practice, this imagery is vastly oversimplified, and in reality maybe the big mind would look like a big circle with lots of little circles hanging off it and lots of other connections. Still, probably a mind N times as big as another doesn't have N times as many circles?[c]

Depictions of a big recurrent brain, a small recurrent brain, and slightly more accurate renditions of them.

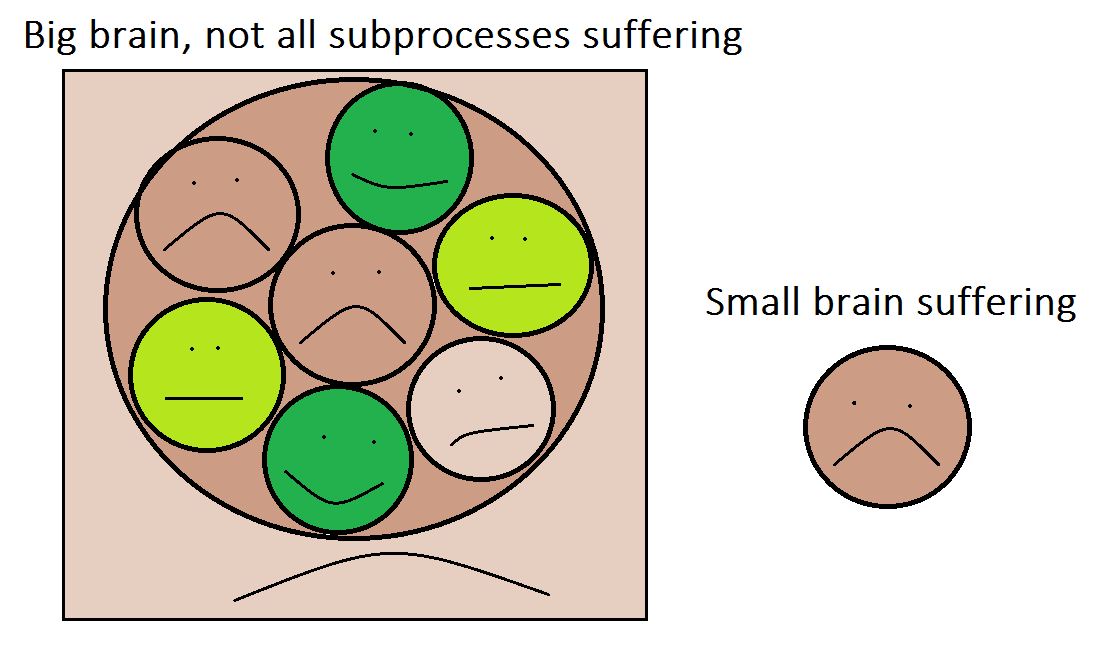

More importantly, the high-level brain systems may not be very correlated with lower-level systems. Suppose for the sake of argument that a rat brain contains subroutines that can be seen as equivalent to 1000 insect brains. If the rat injures itself, its high-level brain systems respond with suffering, but this doesn't mean all of its subsystems are suffering. Many of those subcomponents may continue blindly passing along information just like they always did. In contrast, an insect brain of the same size as one of the rat's small subroutines would engage aversive processing. This idea is illustrated in the following figure. The point is that even if a big brain has 1000 times the pure sentience of a smaller one, it plausibly suffers less than 1000 times as much as a smaller brain in response to any given injury.

To drive home the point further, following are stylized code snippets for a small brain suffering and then a large brain suffering. The basic idea is that the large brain has much more complex machinery to carry out a similar task of detecting injury and responding with negative reinforcement, but it's not clear that all the information-computation subroutines that go into the overall suffering process should themselves count as suffering on their own.

Simple brain:

    degreesAboveTemperatureThreshold = 10
    tissueDamage = 0
    hunger = 2
    suffering = 5 * degreesAboveTemperatureThreshold + 10 * tissueDamage + hunger
    reinforcement = -suffering

Complex brain:

    degreesAboveTemperatureThreshold_sensor1 = 8
    degreesAboveTemperatureThreshold_sensor2 = 11
    degreesAboveTemperatureThreshold_sensor3 = 7
    temp1_averageDegreesAboveTemperatureThreshold = 0.3 * degreesAboveTemperatureThreshold_sensor1 + 0.42 * degreesAboveTemperatureThreshold_sensor2 + 0.28 * degreesAboveTemperatureThreshold_sensor3
    temp2_averageDegreesAboveTemperatureThreshold = randomTransmissionNoise(0.95, 1.05) * temp1_averageDegreesAboveTemperatureThreshold
    localMotorReinforcement -= 0.2 * temp2_averageDegreesAboveTemperatureThreshold
    temp3_averageDegreesAboveTemperatureThreshold = randomTransmissionNoise(0.95, 1.05) * temp2_averageDegreesAboveTemperatureThreshold
    tissueDamage_sensor1 = 0
    tissueDamage_sensor2 = 0
    averageTissueDamage = 0.48 * tissueDamage_sensor1 + 0.52 * tissueDamage_sensor2
    combinedPhysicalDamage = temp3_averageDegreesAboveTemperatureThreshold + 1.8 * averageTissueDamage
    sendToMemories(combinedPhysicalDamage)
    speak(sendToVerbalCenters(combinedPhysicalDamage))
    hunger = 0.2 * averageLevelOfHormone1() - 0.1 * averageLevelOfHormone2() + 0.5 * cognitiveAssessment() - 0.05 * computeStress()
    localFeedingMotorReinforcement -= 2 * hunger
    suffering = combinedPhysicalDamage + 0.2 * hunger
    suffering *= adjustmentFactorFromHigherCognition(combinedPhysicalDamage, hunger, serotoninConcentration, memories)
    highLevelReinforcement -= suffering

Suffering from a given injury vs. lifetime suffering

It's important to distinguish

1. the moral weight of a given injury (e.g., punching a person in the face), versus

2. the moral weight of an animal's existence compared against non-existence.

I think an animal's moral weight scales closer to linearly in brain size for #2 than for #1, as I'll explain now.

As an extreme simplification, we can picture a human brain of ~10^11 neurons as being composed of 1 million "insects" that each have 10^5 neurons. At any given time, different "insects" (subcomponents of the human brain) are doing different things. Some of them are signaling danger; others are suppressing the danger signals; some signals are growing in strength; others are getting weaker. While not as autonomous as a literal insect would be, these brain subcomponents can still be seen as having their preferences fulfilled or frustrated to varying degrees. Thus, at any given time, some fraction of the "insects" in the human brain are suffering or having their preferences frustrated. Suppose that over the long run, a fraction F of the brain's "insects" are suffering. For example, if F is 1/10, then an average of 100,000 "insects" suffer at any given instant over the long run. Now suppose the person gets punched in the face. This causes significant suffering as viewed from the high-level, aggregate behavior of the brain. However, not all the subcomponents of the brain suffer due to this event; suppose that only, say, 200,000 of the brain's "insects" suffer. Then the increase in "insect" suffering, over baseline levels, caused by the punch is only 200,000 (the number suffering from the punch) minus 100,000 (the average number that ordinarily suffer), which is 100,000. Thus, in a brain of 1 million "insects", the punch only caused an extra 100,000 "insects" to suffer. Since 100,000 = (10^6)^(5/6), this suggests that the increase in "insect" suffering due to injury scales as (number of "insects")^(5/6), i.e., less than linearly in brain size.

Now let's compare 10 minutes of the person's brain with 10 minutes of a literal insect. Suppose that the literal insect suffers for 1/10 of those 10 minutes, which is 1 insect-minute of suffering. Meanwhile, the person's brain contains an average of 100,000 suffering "insects" per minute, which implies 1 million "insect"-minutes of suffering over the whole 10 minutes. In this case, the human brain, which contains 1 million "insects", suffers 1 million times more than the literal insect over the 10-minute period, i.e., the lifetime suffering caused by creating the organism scales linearly in brain size.

Obviously these examples are contrived, and different conclusions would result from different input assumptions, but hopefully I've illustrated the basic idea. I care more about a literal insect than a subroutine of the human brain of the same number of neurons because the literal insect seems more autonomous, has less neural redundancy, has more independent abilities, and so on, so the example above shouldn't be taken too seriously.
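The arithmetic in the two scenarios above can be checked with a short sketch. All of the numbers are the contrived inputs from the thought experiment, and the variable names are just labels chosen for this illustration. Changing the inputs changes the exponent, so this is a check of the arithmetic rather than an estimate of anything.

```python
import math
from fractions import Fraction

# Contrived inputs from the thought experiment (not empirical values).
NUM_SUBCOMPONENTS = 1_000_000          # "insects" in a human brain: 10^11 / 10^5 neurons
BASELINE_FRACTION = Fraction(1, 10)    # F: long-run fraction of "insects" suffering
SUFFERING_AFTER_PUNCH = 200_000        # "insects" suffering because of the punch
MINUTES = 10

# Case 1: a given injury. Only the increase over baseline counts.
baseline_suffering = NUM_SUBCOMPONENTS * BASELINE_FRACTION
extra_from_punch = SUFFERING_AFTER_PUNCH - baseline_suffering

# Exponent k such that extra_from_punch == NUM_SUBCOMPONENTS ** k.
exponent = math.log(extra_from_punch) / math.log(NUM_SUBCOMPONENTS)

# Case 2: existence over a period. Baseline suffering accumulates over time.
human_insect_minutes = baseline_suffering * MINUTES
literal_insect_minutes = BASELINE_FRACTION * MINUTES
lifetime_ratio = human_insect_minutes / literal_insect_minutes

print(f"extra 'insects' suffering from the punch: {extra_from_punch}")
print(f"implied scaling exponent for injury: {exponent:.3f}")
print(f"human-to-insect ratio of baseline suffering: {lifetime_ratio}")
```

Under these inputs the injury case gives an exponent of about 5/6, while the baseline case gives a ratio equal to the number of subcomponents, i.e., linear scaling.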

Beauty-driven intuitions

When we better understand how brains work, we see that there's no "natural" weighting of moral value. There's not some magical essence of emotional experience contained within a brain that can be neatly counted, in the way that we might count dollars in a bank account. Rather, there are components influencing other components, which influence other components, and the valuation is something we need to impose. Of course, the brain does operate based on numbers: How many neurons are firing at what rate to signal different messages. But these are local, specific operations that by themselves don't matter much; the larger, non-quantitative context makes them relevant.

It feels somewhat elegant to take neural systems as important and then suppose that bigger, more complex ones are more important. This approach seems aesthetically pleasing. But fundamentally there is nothing compelling this choice, just as there's nothing compelling us to start the alphabet with the letter "A" instead of the letter "P". It's just a bias we hold out of many possibilities. But it seems like ethics should be about what matters to other organisms, not about what we personally find beautiful?

Giving more weight to bigger brains embodies a general principle of favoring things that are powerful and influential over those that are weak and less noticed. Maybe we don't feel this is right and that the "little guy" should count more. Of course, then we run into questions about which little guys to favor, since there are so many of them.

Mind boundaries are not always clear

In the "Quantity of experience" paper mentioned above, Bostrom goes on to discuss cases of minds that are neither wholly unified nor wholly separated. For instance, if only a fraction of the logic gates of the digital minds in his example have had insulators slipped through them, then parts of the computation are shared and parts are distinct. In the limit that no logic gates are separated, we have one mind, and in the limit that they're all separated, we have two. In between, we seem to have ... 1.5? (At least, this is my interpretation, not necessarily Bostrom's.)

Even without going into toy computational scenarios, we can see concrete examples of this question in familiar contexts:

- What are we to make of twins whose heads are conjoined?

- Of turtles with two heads?

- How about you and your iPhone?

- Is the Trinity one god or three?

- What about ants? Are they separate or part of a single hive mind? The ants in a colony are mostly sisters, sharing some common DNA from the queen. Even though they're not physically bound together, they're working toward a common goal, just like the cells in a single organism's body.

- But if we're willing to group together organisms because they work together due to shared DNA, what are we to make of you and your siblings? If you have two brothers, are you and them collectively less than three people?

- For that matter, how about you and friends with whom you don't share genetic relation? Like any other part of your brain, they influence your behavior, store memories for you to retrieve, and give you information about your surroundings. Fields like relational sociology expound upon the sense in which individuals are not purely individuated. In psychology, the perspective of seeing a collection as being a whole is called entitativity.

- What about slime molds? When food is scarce, they bunch together like one big organism, but when they find food, they split apart. John Bonner: "[Slime molds are] no more than a bag of amoebae encased in a thin slime sheath. Yet they manage to have various behaviors that are equal to those of animals who possess muscles and nerves with ganglia—that is, simple brains."

- This page features a picture of Bryophyllum pinnatum producing new plantlets next to itself. The caption says: "The concept of 'individual' is obviously stretched by this asexual reproductive process."

- Godfrey-Smith (2016), p. 781: "multicellular organisms have vague boundaries with respect to which cells are parts of the organism and which are not. I think this is the right message to draw from recent work on symbioses between eukaryotic cells and their microbial partners".

- Discussing the arguments of Allen and Hoekstra (1992), Lockwood (1996) concludes (p. 5):

there is simply no compelling biological rationale for exclusively distinguishing ourselves (or other eucaryotes) as "individuals," given that we are comprised of a range of genetic material (including both nuclear and mitochondrial DNA), support a variety of living organisms (including our intestinal flora and follicular mites), depend on a network of conspecific organisms (including our parents and mates), and require an extensive interspecific system of relationships (including our food plants and domestic animals).

Brain-size weighting would help resolve the weirdness in these cases. An ant colony would collectively count as much as so many individual insects, and a pair of siblings would actually count double as much as an only child. Still, we're not forced to adopt brain-size weighting. We could also bite the bullet and admit that there's a fractional number of individuals in some cases, applying equality weighting to whatever number that is. Most of the time this wouldn't produce conclusions that we found too weird if we took the degree of connection among people and among ants to be sufficiently small. For instance, if physical contiguity of neural tissue played a big role in deciding how many minds were present, then all but the extreme thought experiments would have mostly common-sense resolutions.

Small brains matter more per neuron

Even if we don't buy full equality weighting for small minds, there are reasons to give small brains more weight per neuron than large brains.

Suppose certain insects run algorithms that self-model their own reaction to pain signals in a way similar to how this happens in humans. (See, for instance, pp. 77-81 of Jeffrey A. Lockwood, "The Moral Standing of Insects and the Ethics of Extinction" for a summary of evidence suggesting that some insects may be conscious.) If we do weight by brain size, given that bees have only 950,000 neurons while humans have 100 billion, should we count human suffering exactly 105,263 times as much as comparable bee suffering? Here are some reasons not to:

- The relevant figures should not be the number or mass of neurons in the whole brain but only in those parts of the brain that "run the relevant pieces of pain code." I would conjecture that human brains contain a lot more "executable code" corresponding to non-pain brain functions that bees lack than vice versa, so that the proportion of neurons involved in pain production in insects might be higher.

- From "Encephalization quotient" on Wikipedia:

If Stegosaurus could survive with this tiny brain, it would seem that any animal with anything bigger must be using it for non-essential abilities. However, mammalian evolution has repeatedly improved the effectiveness of a bodily function by innervating it more; the digestive and immune systems are examples. Thus, while an elephant has a much larger brain than a Stegosaurus, a substantial part of the excess brain is bound up in bodily functions rather than cognitive functions.

- The kludgey human brain, presumably containing significant amounts of "legacy code," is probably a lot "bulkier" than the more highly optimized bee cognitive architecture. This is no doubt partly because evolution constrained bee brains to run on small amounts of hardware and with low power requirements, in contrast to what massive human brains can do, powered by an endothermic metabolism. Think of the design differences between an operating system for, say, a hearing aid versus a supercomputer. If we care about the number of instances of an algorithm that are run more than, e.g., the sheer number of CPU instructions executed, the difference between bees and humans shrinks further.

These points raise some important general questions: How much extra weight (if any) should we give to brains that contain lots of extra features that aren't used? For instance, if we cared about the number of hearing-aid audio-processing algorithms run, would it matter if the same high-level algorithm were executed on a device using the ADRO operating system versus a high-performance computer running Microsoft Vista? What about an algorithm that uses quicksort vs. one using bubblesort? Obviously these are just computer analogies to what are probably very different wetware operations in biological brains, but the underlying concepts remain.

Individual neurons in a big brain can be viewed more as "cogs in a machine" than individual neurons in smaller brains. Smaller brains are like startup companies, where any individual neuron can play a fairly significant role. In contrast, big brains are like large corporations, where there are lots of specialized departments (cognitive modules, e.g., face recognition) that each contain many neurons doing the same general task. It's plausible that any given neuron (employee) is more morally important in a smaller brain because it plays a bigger role in the success of its brain (company).

If what matters most about a brain is its qualitative complexity in terms of behavioral repertoire, number of different "subroutines", and so on, then it seems plausible that this qualitative complexity scales less than linearly in number of neurons. For example:

the behavioral repertoire[s] of invertebrates such as insects sometimes surpass [those] of mammals such as moose and monkeys. The number of different distinct behaviors have been counted in several dozen species. What counts as one distinct behavior? For example, among honeybees, one behavior is “corpse removal: removal of dead bees from the hive,” and another example of a behavior is “biting an intruder: intruders are sometimes not stung but bitten.” The number of different behaviors in different insect species range at least from 15 to 59, while “amongst mammals, North American moose were listed with 22, De Brazza monkeys with 44 and bottlenose dolphins [with] 123.” Honeybee workers are the insects with 59 behaviors, which surpasses at least that of moose and De Brazza monkeys.

In "Are Bigger Brains Better?," Chittka and Niven explain:

Neural network analyses show that cognitive features found in insects, such as numerosity, attention and categorisation-like processes, may require only very limited neuron numbers. Thus, brain size may have less of a relationship with behavioural repertoire and cognitive capacity than generally assumed, prompting the question of what large brains are for. Larger brains are, at least partly, a consequence of larger neurons that are necessary in large animals due to basic biophysical constraints. They also contain greater replication of neuronal circuits, adding precision to sensory processes, detail to perception, more parallel processing and enlarged storage capacity. Yet, these advantages are unlikely to produce the qualitative shifts in behaviour that are often assumed to accompany increased brain size.

The fact that cognitive ability and intelligence scale sublinearly with brain size at least hints that degree of consciousness might too.

Bigger brain circuits may also imply more systemic noise, which requires even more neural redundancy. This again suggests sublinear efficiency with number of neurons.

Charles Darwin, The descent of man, and selection in relation to sex:

no one supposes that the intellect of any two animals or of any two men can be accurately gauged by the cubic contents of their skulls. It is certain that there may be extraordinary mental activity with an extremely small absolute mass of nervous matter: thus the wonderfully diversified instincts, mental powers, and affections of ants are generally known, yet their cerebral ganglia are not so large as the quarter of a small pin's head. Under this latter point of view, the brain of an ant is one of the most marvellous atoms of matter in the world, perhaps more marvellous than the brain of man.

And D. M. Broom, "Cognitive ability and sentience: Which aquatic animals should be protected?":

some animal species or individuals function very well with very small brains, or with a small cerebral cortex. The brain can compensate for lack of tissue or, to some extent, for loss of tissue. Some people who have little or no cerebral cortex have great intellectual ability.

An animal's number of neurons is often used for brain-size weightings because data for it are readily available. But linear neuron weighting doesn't seem right because smaller brains are more efficient and retain many impressive abilities with just a few neurons. One quick-and-dirty approximation could be to weight by n^α, where n is number of neurons and 0 < α < 1. For example, a human has about 10^5 times more neurons than a cockroach, but intuitively I feel the human matters at most 10^2 times as much, so α = 2/5 seems plausible. Of course, this simple exponent approach is just an approximation because it ignores sizes of the hedonic centers specifically, as well as many other morally relevant attributes that vary from animal to animal.
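As a minimal sketch of the exponent approach, the function below weights a brain by n^α. The function name `moral_weight` and the round neuron counts are assumptions made for illustration; only the 10^5 ratio and α = 2/5 come from the paragraph above.

```python
def moral_weight(neurons: float, alpha: float = 0.4) -> float:
    """Weight a brain by neurons ** alpha, with 0 < alpha < 1 (sublinear)."""
    if not 0 < alpha < 1:
        raise ValueError("alpha must lie strictly between 0 and 1")
    return neurons ** alpha


# Round illustrative counts chosen so that the ratio is 10^5 (assumption).
cockroach_neurons = 1e6
human_neurons = 1e11

ratio = moral_weight(human_neurons) / moral_weight(cockroach_neurons)
print(f"human weight relative to cockroach: {ratio:.1f}")
```

Because the weight is a pure power law, the ratio depends only on the ratio of neuron counts: (10^5)^(2/5) = 10^2.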

"Number of neurons" seems like a better proxy for moral importance than "raw brain mass". For example, this study argues that birds are more intelligent than their brain sizes alone would predict because they have denser neurons: "large parrots and corvids have the same or greater forebrain neuron counts as monkeys with much larger brains. Avian brains thus have the potential to provide much higher 'cognitive power' per unit mass than do mammalian brains."

Other measures besides number of neurons

Forum user EmbraceUnity suggests that one principle relevant to brain valuation might be Metcalfe's law: "the value of a telecommunications network is proportional to the square of the number of connected users of the system". If we picture the brain as a directed graph of neurons that can connect to any neuron (including themselves), then the number of possible edges in this directed graph is the square of the number of nodes, i.e., the square of the number of neurons. Of course, in real human brains, each neuron only "has on average 7,000 synaptic connections to other neurons." Also, if every neuron connected to every neuron with equal strength, then the connections would be meaningless, since information is coded in the brain by distinctions between neural activation in some subnetworks and not others.
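To get a feel for how far a real brain is from the fully connected graph that Metcalfe's law pictures, here is a rough sketch. It assumes a human neuron count of 8.5 * 10^10 and treats each of the 7,000 average synaptic connections as one distinct directed edge, which is a simplification, since two neurons can share several synapses.

```python
# Assumed inputs (illustrative; see the caveats in the lead-in).
NEURONS = 8.5e10              # approximate human neuron count
SYNAPSES_PER_NEURON = 7_000   # average from the quotation above

# Directed graph with self-loops allowed: every ordered pair is a possible edge.
possible_edges = NEURONS ** 2
actual_edges = NEURONS * SYNAPSES_PER_NEURON

# Fraction of possible edges that are realized.
density = actual_edges / possible_edges

print(f"possible edges: {possible_edges:.2e}")
print(f"actual edges:   {actual_edges:.2e}")
print(f"density:        {density:.2e}")
```

Under these assumptions, fewer than one in ten million possible connections exists, so actual connectivity grows roughly linearly with neuron count rather than quadratically.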

my intuition is that what matters for consequentialism is the algorithm(s) being implemented by a mind. So sophisticated brains may be capable of quantitatively or qualitatively more suffering than primitive ones. But sophistication is not the same as size, and pain-relevant sophistication is not the same as general sophistication. I suspect that, as an extremely rough approximation, increases in the size of a neural net provide diminishing returns to the being's capacity for suffering. So although we humans are much smarter than other animals, we may not have dramatically greater capacity for (especially physical) suffering. Gun to my head, I think the best weighting option with the crude data available here is some increasing concave function of synapse count. I would not be surprised if that function leveled off substantially before reaching the level of fish or even insects, let alone chickens.

If we're counting synapses, do we count any directed connection between two neurons as one logical synapse? Or do we count literal, physical synapses? Two neurons may have more than one physical synapse between them. Also, the strength of a connection is determined not just by number of synapses but also by amount of neurotransmitter released, numbers of receptors per synapse, and so on. And of course, there are different types of neurotransmitters and different types of receptors.

Also, it seems like relative synapse strength among the input neurons may be relevant? For example, if a receiving neuron receives extremely strong inputs from neuron A but barely noticeable inputs from neurons B and C, it's almost as if the connections from B and C aren't present. In contrast, if the inputs from each of A, B, and C are about equally strong, then it's more reasonable to say that there are three input neurons rather than just effectively one. We might also want to consider how diverse the input neurons are; two input neurons both responding to the same stimulus may be less informationally rich than two input neurons that come from completely different brain regions.
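One possible way to turn the "effectively one input" intuition into a number is a participation ratio, (sum of strengths)^2 / (sum of squared strengths), also known as the inverse Simpson index. This measure is my choice for illustration, not something proposed in the sources discussed here, and the strengths are made-up non-negative values.

```python
def effective_inputs(strengths):
    """Participation ratio: (sum w)^2 / sum(w^2) for non-negative strengths.

    Returns the number of inputs when all strengths are equal, and
    approaches 1 when a single input dominates.
    """
    total = sum(strengths)
    sum_of_squares = sum(w * w for w in strengths)
    if sum_of_squares == 0:
        return 0.0  # no input at all
    return total * total / sum_of_squares


# Hypothetical strengths for input neurons A, B, and C.
dominated = effective_inputs([100.0, 1.0, 1.0])  # A swamps B and C
balanced = effective_inputs([1.0, 1.0, 1.0])     # all three equally strong

print(f"dominated case: {dominated:.2f} effective inputs")
print(f"balanced case:  {balanced:.2f} effective inputs")
```

This captures only relative strength. It says nothing about how diverse the inputs are, which would need a separate measure.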

More complex measures are possible, such as those of integrated information theory.

Maybe we want to focus on the number and size of neurons firing at any given moment. In this case, brain metabolism may be a reasonable metric, and this metric is not too hard to measure or allometrically estimate. Of course, a neuron that always fires conveys no more information than a neuron that never fires (except maybe based on the timing of firing), but a neuron that always fires uses far more energy than one that never fires. (However, presumably biological brains have evolved to keep needless neuron firing relatively low.)

Going to a higher level, we could assess the sentience of a brain based on how many "abilities" it has, for some measure of abilities. For example, we can count how many types of learning it can demonstrate—such as habituation, classical conditioning, operant conditioning, reversal learning, occasion setting, spatial learning, number-based learning, relational learning, and so on (Perry et al. 2013). Or how many types of distinct behaviors it has. Or how it scores on a cross-species intelligence test. And so on.

Smaller animals may have proportionally more brain metabolism

This section presents a theoretical argument why the metabolism of a brain may scale less than linearly in the mass of the brain. Brain metabolism seems like a better proxy for sentience than raw brain mass. Thus, even without applying any other arguments about how smaller brains use more efficient algorithms or have more agency per cognitive operation, we might think that the moral relevance of a brain scales less than linearly in brain mass.

Counting independent subcomponents?

One reason to care more about bigger brains is that they may contain many, roughly independent subcomponents that each on their own would be analogous to a small brain. (Whether this is actually true I'm not sure.) So, for example, if a bigger animal's brain contained 10 fairly disjoint components that each were the size of a smaller animal's brain, the bigger animal would count 10 times as much.

On this view, more parallel copies count more, but it's not clear if more complicated serial processing does. If one part of the brain computes 3 + 1 = 4 and makes it available to awareness, and another part computes -4 + 2 = -2 and makes it available to awareness, that seems like two conscious perceptions, but if the brain first takes the whole sum 3 + 1 + -4 + 2 = 2, that seems like just one.

Of course, it's arbitrary where we define the cutoff point to be between the "conscious" perceptions and the "unconscious" ones. Ultimately, all we have are neurons influencing other neurons influencing other neurons, and the hierarchical structure of moving "upward" in degree of awareness is something that we superimpose. Why do we think the lower layers of that tree-like structure aren't just as conscious as the top layer? For instance, suppose we had 100 people, recorded their brain activity, and then fed it into a big computer brain that aggregated it into a final "conscious" report. That wouldn't mean the 100 individuals weren't also conscious in a morally important way. Aggregation at a higher level doesn't render lower levels irrelevant.

Physical or algorithmic size?

Suppose we morally weight brains by some function of size—perhaps a nonlinear function that gives more weight to smaller brains than their relative number of neurons. There remains a question about what kind of size we're measuring. For animal brains, we could look within hedonic brain regions at mass, volume, number of neurons, or number of independent modules. Each of these would give different answers, but they'd be at least somewhat correlated.

However, for minds in general, the correlation could break down. How would we compare a Lego Turing machine against a nanoscale atomic machine performing the same operations? My intuition says they should be roughly equal, because they're executing the same algorithm, and if they were hooked up to a robotic interface, we would see the robot behaving identically in either case. On the other hand, if we think back to Bostrom's example with wires being split by insulators, in that scenario some people might feel that the sheer volume of wires was relevant, because bigger wires could be split into more smaller computers. Likewise, a Lego Turing machine could be atomically rearranged into at least millions of nanocomputers.

Still, the fact that matter could be arranged in some other way doesn't mean it has equivalent value when it's not so arranged. One might just as well say that a universe in which a tiny creature stubs its toe could be rearranged into googols of tiny creatures stubbing their toes, but this doesn't make the event astronomically awful. This distinction between what could be and what is seems important.

I think most people care about algorithmic size rather than physical size. But if so, this shows us that brain-size weightings can't be taken to extremes. One might say that a human matters more than a mouse because if we count by neurons rather than by individuated organisms, there are more components in the human brain. But why count by neurons rather than by atoms? And if we count by atoms, should we weigh a bulky, clumsy, inefficient brain more than a sleek one that can perform the same functions? Algorithmic size can't necessarily save us from this quandary, because we can imagine two brains that differ only in that one brain uses vastly less efficient algorithms. It doesn't seem right that the inefficient brain should count more in direct proportion to its increased number of algorithmic steps. Fundamentally, comparing different brains is comparing apples and oranges, and attempts to establish a single common currency for exchange seem likely to yield counterintuitive cases.

Do "real" brains matter more than simulated?

In "Minds, brains, and programs", John Searle claims:

The idea that computer simulations could be the real thing ought to have seemed suspicious in the first place because the computer isn't confined to simulating mental operations, by any means. No one supposes that computer simulations of a five-alarm fire will burn the neighborhood down or that a computer simulation of a rainstorm will leave us all drenched.

In response, functionalist philosophers of mind have argued that the functional behavior, rather than physical construction, of a system is what matters for attributing mind to it. There's a functionalist sense in which a simulated rainstorm does leave things drenched: In particular, it leaves the simplified toy world of the computer simulation drenched, leading simulated characters to react in simple ways that adumbrally resemble how "real" people would react to a "real" rainstorm. And as the rainstorm simulation becomes more complex, the degree to which the simulation "actually does get drenched" in a functionalist sense increases.

While I largely sympathize with the functionalists, I can see some merit in Searle's position as well. Even if you only care about functional behavior, there's a sense in which a flesh-and-blood human is more algorithmically complex than a computer-emulated human simulated only to the neuronal level of detail. The brain of the flesh-and-blood human contains vastly more complex physical processing in the form of neurotransmitter movements, ATP utilization, ion pumping, gene transcription, etc. Of course, a simulated brain also has its own share of complex physical processing in its electrical circuitry. But insofar as the computer emulation can (in the long run, not with present technology) be made to emulate a human using computing power made out of less physical matter than the amount of matter in a biological brain, a human brain being emulated by an advanced posthuman civilization would, in some sense, be vastly simpler than a biological brain, even from a functionalist standpoint—at least if we consider the functional behavior of cells and molecules to contribute nonzero ethical weight to a brain. How much more moral consideration a brain deserves merely in virtue of having more complex physical processing below the neuronal level of organization is up for debate. More embodied views of cognition are likely to feel that detailed simulations of bodies and external environments are required before a simulation counts as much as a non-simulated human.

Searle is right that a simulation is not the same as a biological brain. Once we realize that consciousness is not a "thing" that supervenes on physical systems, we can see that there's no ontologically fundamental sense in which a digital emulation of a brain is "phenomenally indistinguishable" from its corresponding biological brain, because there is no separate realm of ontologically basic phenomenality. A biological brain and an emulated copy of it are just two different physical processes that share morally important algorithmic similarity. It seems plausible that both deserve significant moral weight, but it's not wrong to continue giving them unequal moral weight.

Intuitions about a China brain

Suppose we had a China brain scenario in which a billion individually conscious people performed actions corresponding to a country-sized conscious agent. Would we say that the China brain's experiences were vastly more ethically important than those of any individual human? This can be a helpful testing ground for our intuitions about brain size insofar as people don't have a bias against caring a lot about the individual humans in the same way that they may be biased against caring a lot about individual insects.

One response is to advance an "anti-nesting" principle (see section 2 of Eric Schwitzgebel's "If Materialism Is True, the United States Is Probably Conscious" for a discussion): Because the individual members of the Chinese population are conscious, the China brain cannot be. This seems not right, for various reasons that Schwitzgebel elaborates. In addition, it seems like a billion Chinese citizens forming a nationwide brain is ethically different from a billion of them acting in a way that doesn't constitute a brain. Remember, there's no physical spookiness here: "Conscious" is just a label we apply when a situation pattern-matches against ideas we have about what "conscious" means. There's no reason there can't be multiple layers of systems all of which we call conscious.

So how much would the China brain matter compared with an individual human? One tempting answer is to say a China brain composed of 1 billion people matters 1 billion times as much as a person, but this isn't right, because the sum of value of each of the smaller brains is counted separately in our ethical balance sheet from the value of the big brain, since the minds are not the same. Another tempting answer is to say that the big brain matters as much as any single small human brain, assuming the functions it performs are roughly equivalent. If the number of top-level aggregations in the big brain is the same as in a small human brain, then indeed it seems like the two are not morally different (per unit clock speed). Why should the size of the materials from which it's constructed matter?

This is a tough question, and fundamentally there's no right answer. The human mind is a messy jumble of intuitions that can fire at various times in response to various ways of looking at something, and what these thought experiments do is just trigger certain reactions based on how they're constructed. The more one learns about this field and reads the literature, the more one's brain is rewired to respond to philosophical thought experiments.

Finally, I should point out some ways in which the China-brain scenario is not like the humans-vs.-insects debate. If the China brain is assumed to perform the same functions as a human brain, then the only thing that makes the China brain special is its raw size. In contrast, humans have many more neurons and more functions than insects, while the neurons themselves are not vastly different in size. Also, the ratios of neuron counts are not the same. For instance, a cockroach has roughly 10^6 neurons, against 8.5 * 10^10 for humans. Meanwhile, if we assume that each human in the China brain corresponds to one neuron, then the China brain has 10^9 neurons.
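To make the mismatch in ratios concrete, here is a small arithmetic sketch using the rough counts from the paragraph above: about a million neurons for a cockroach, about 85 billion for a human, and a billion person-sized "neurons" for the China brain. The variable names are my own, and the one-person-per-neuron mapping is the simplifying assumption stated in the text, not an empirical claim.

```python
# Rough counts quoted in the text (orders of magnitude only).
COCKROACH_NEURONS = 10**6
HUMAN_NEURONS = 85 * 10**9  # 8.5 * 10^10

# Assumption from the text: each person in the China brain plays the role of one neuron.
CHINA_BRAIN_UNITS = 10**9

human_to_cockroach = HUMAN_NEURONS / COCKROACH_NEURONS
human_to_china_brain = HUMAN_NEURONS / CHINA_BRAIN_UNITS

print(f"human : cockroach   = {human_to_cockroach:,.0f} : 1")
print(f"human : China brain = {human_to_china_brain:,.0f} : 1")
```

On this mapping the China brain actually has fewer units than a human brain has neurons, so in unit count it sits between the cockroach and the human rather than above the human.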

Value pluralism

Both the size-weighting and equality-weighting positions have compelling arguments. In the end, I might adopt a stance of "value pluralism" on the question, in which I care about both brain size and individual agenthood to some degree. I explain more details in this section of another essay.

"Unequal Consideration" vs. "Unequal Interests"

DeGrazia (2008) distinguishes two frameworks in which non-human animals might have less moral status than humans. In the Unequal Consideration Model of Degrees of Moral Status (p. 186), humans are seen to warrant more moral weight than other animals even for equal interests. For example, suppose that hitting a human and a raccoon would both cause suffering of -5 units, and ignore indirect effects. The Unequal Consideration approach would say it's worse to hit the human (pp. 187-88).

Meanwhile, the Unequal Interests Model of Degrees of Moral Status says that humans often matter more because they often have stronger interests (p. 188). For example, hitting a human might cause -10 units of suffering, while hitting the raccoon causes only -5. Even giving suffering equal weight regardless of species, we judge hitting the human to be worse.

DeGrazia (2008) says "the difference between the two models of degrees of moral status is meaningful and very important in certain contexts" (p. 188).

If we think of suffering as measurable in some clear way, then I agree that there's a real distinction between these two models. Here's an unrealistic example to make the situation concrete. Suppose we identify some unambiguous cluster of neurons in both human and raccoon brains that we designate as "pain neurons", and suppose we measure suffering as the sum total number of times that such pain neurons fire. Then we'll likely have a situation of unequal interests, because presumably raccoons have fewer pain neurons. If we applied any further bias on behalf of humans beyond the differences in pain-neuron firing, we would be giving unequal consideration.
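The toy pain-neuron metric above can be sketched in a few lines. Everything here is hypothetical: the `Subject` class, the firing counts (10 and 5, echoing the earlier -10 and -5 example), and the consideration multiplier of 2.0 are made-up illustrations, not measurements or anything DeGrazia proposes.

```python
from dataclasses import dataclass


@dataclass
class Subject:
    name: str
    pain_firings: int            # made-up count of pain-neuron firings from one blow
    consideration: float = 1.0   # 1.0 means equal consideration


def interests_only(s: Subject) -> float:
    """Unequal Interests: suffering is just (minus) the firing count."""
    return -float(s.pain_firings)


def with_consideration(s: Subject) -> float:
    """Unequal Consideration: an extra multiplier applied on top of the metric."""
    return s.consideration * interests_only(s)


human = Subject("human", pain_firings=10, consideration=2.0)
raccoon = Subject("raccoon", pain_firings=5)

for s in (human, raccoon):
    print(s.name, interests_only(s), with_consideration(s))
```

The two models come apart only because `pain_firings` is fixed independently of `consideration`; the distinction depends on having a metric of suffering that does not already encode our moral weighting.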

However, my own view is that "suffering" is a much more slippery, holistic concept than "number of pain-neuron firings", and simple metrics to assess it are unlikely to capture the full nuance we want the concept to embody. Ultimately, I think suffering is something that we should assess based on a combination of various objective metrics and our own subjective moral impulses. If we take this view, then the difference between the Unequal Consideration and Unequal Interests models blurs, because part of what it means to say that a human experiences more suffering (i.e., that there are unequal interests) is that we care more about or give more moral weight to the human (i.e., unequal consideration). If the "suffering" numbers that we assign to the human and the raccoon reflect our holistic moral judgments, then those numbers already bake in any unequal consideration that we might want to apply to the situation.

DeGrazia (2008) himself seems to consider possible differences in sentience between animals to fall under the Unequal Consideration framework (p. 193). Does this mean that he's assuming that Unequal Interests adherents use some measure of suffering that's independent of degrees of sentience and that Unequal Consideration adherents then apply sentience weighting on top of that measure? For example, this could be true if Unequal Interests adherents normalize the utility of all agents to the same scale (say, between 0 and 1), and Unequal Consideration adherents apply sentience weighting to those normalized utilities.
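One way to picture that last possibility is the sketch below. It is only an illustration of the interpretation floated above, not something DeGrazia specifies: the raw utility ranges, the size of the harm, and the sentience weights are all invented numbers, and `normalize` is a hypothetical helper.

```python
def normalize(utility: float, worst: float, best: float) -> float:
    """Rescale a raw utility onto [0, 1] using the agent's own worst and best outcomes."""
    return (utility - worst) / (best - worst)


# Invented numbers: each agent has its own raw scale and a hypothetical sentience weight.
agents = {
    "human":   {"before": 0.0, "after": -40.0, "worst": -100.0, "best": 100.0, "sentience": 1.0},
    "raccoon": {"before": 0.0, "after": -4.0,  "worst": -10.0,  "best": 10.0,  "sentience": 0.5},
}

results = {}
for name, a in agents.items():
    # Step 1 (Unequal Interests reading): loss measured on the agent's own normalized scale.
    loss = normalize(a["before"], a["worst"], a["best"]) - normalize(a["after"], a["worst"], a["best"])
    # Step 2 (Unequal Consideration reading): sentience weighting applied on top.
    results[name] = (loss, a["sentience"] * loss)
    print(name, round(loss, 3), round(a["sentience"] * loss, 3))
```

With these numbers the normalized losses are equal, so any difference in the final figures comes entirely from the sentience weight.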

Acknowledgments

This piece has benefited from exchanges with Carl Shulman, although he doesn't necessarily endorse the views expressed here. See also "Sentience and brain size" on Felicifia.

Footnotes

- Jeffrey Lins asked whether it's empirically true that Sam would trade away almost anything to avoid shock. Here's my reply.

Insects at least escape from and learn to avoid electric shock, and crabs have been shown to more directly make motivational tradeoffs to avoid shock (source).

It's tricky to prove a claim like "would trade away almost anything" in the insect case because insects probably don't have enough ability to conceive of their options to meaningfully make tradeoffs across a large swath of potential outcomes. One reasonable (though inhumane) experimental setup might be to shock insects engaged in mating and see whether they disperse.

It's clear to us that a sufficiently intense shock is worse for an insect's evolutionary fitness than any comparable gain (if the insect is killed or otherwise permanently prevented from mating by the event), so it's not implausible that insect nervous systems would represent a shock with a comparable level of seriousness. In any case, we could weaken the claim to say that insects would trade away nontrivial goods to avoid shock, while humans generally wouldn't give up even, say, a single piece of cake to avoid a dust speck. (back)

- Another interpretation of the agent's goal would be that its utility function is -1 times the amount of delay (measured in milliseconds) that results from the success or failure of the agent's prediction. In this case, the worst possible outcome (an infinite delay, perhaps resulting from failure of the computer before the correct branch can be fetched) would be more rare. (back)

- Of course, it depends how we're counting. For instance, the number of two-way connections in a brain might be more than linear in the number of neurons, in light of Metcalfe's law. Two neurons can only form one loop (back and forth between them), while three neurons can form four (three two-neuron loops and one loop around all three neurons). (back)

- In response to an article claiming that "a simulated apple cannot feed anybody", Aki Laukkanen replies: "A simulated apple can feed a simulated human. Duh." (back)
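As a check on the loop-counting footnote above (one loop for two neurons, four loops for three), here is a rough sketch that extends the same counting convention to larger groups. It assumes every neuron is connected to every other, ignores the direction of travel around a loop, and counts each pair as a single back-and-forth loop; these are my simplifying assumptions, and real brains are nowhere near fully connected.

```python
from math import comb, factorial


def pairwise_links(n: int) -> int:
    """Two-way connections among n units: the quantity behind Metcalfe's law."""
    return comb(n, 2)


def loop_count(n: int) -> int:
    """Loops among n fully connected neurons under the footnote's convention.

    Each pair contributes one back-and-forth loop, and each set of k >= 3
    neurons contributes (k - 1)! / 2 distinct undirected cycles through all k.
    """
    total = comb(n, 2)
    for k in range(3, n + 1):
        total += comb(n, k) * factorial(k - 1) // 2
    return total


for n in range(2, 7):
    print(n, pairwise_links(n), loop_count(n))
```

Pairwise links grow quadratically in the number of neurons, while loops counted this way grow much faster, which is one sense in which the answer "depends how we're counting."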